We have put together this S3 cheat sheet, which covers the main points about the S3 service that are addressed in the exam. Any piece of information below may be essential to answering a question, so be sure to read all the points.

Objects:

- Objects (files) are stored in buckets.

- Objects can be between 0 bytes and 5 TB in size, and a bucket has no overall storage limit.

- The largest object you can upload in a single PUT operation is 5 GB.

- With the multipart upload API, you can upload large objects of up to 5 TB in parts.

- Objects are stored flat in buckets. Folders don’t really exist; they are simply part of the object’s key name.

- S3 is basically a key-value store, and each object consists of the following:

  - Key – Name of the object

  - Value – Data made up of bytes

  - Version ID (important for versioning)

  - Metadata – Data about what you are storing

  - ACLs – Permissions for stored objects

- When you upload a file to S3, it is private by default.
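The size limits above can be sketched as a small planning helper. This is only an illustration: `plan_upload` and the 100 MiB preferred part size are hypothetical choices, and the sketch additionally assumes the multipart limit of 10,000 parts per upload, which the list above does not mention.

```python
import math

GB = 1024 ** 3
TB = 1024 ** 4
MAX_SINGLE_PUT = 5 * GB    # single PUT limit from the list above
MAX_OBJECT_SIZE = 5 * TB   # multipart upload limit from the list above
MAX_PARTS = 10_000         # assumed multipart limit on the number of parts


def plan_upload(size_bytes, preferred_part_size=100 * 1024 ** 2):
    """Return ("single_put", 1) or ("multipart", number_of_parts)."""
    if size_bytes < 0 or size_bytes > MAX_OBJECT_SIZE:
        raise ValueError("object size must be between 0 bytes and 5 TB")
    if size_bytes <= MAX_SINGLE_PUT:
        return ("single_put", 1)
    # Grow the part size when needed so the upload fits in MAX_PARTS parts.
    part_size = max(preferred_part_size, math.ceil(size_bytes / MAX_PARTS))
    return ("multipart", math.ceil(size_bytes / part_size))
```

For example, `plan_upload(6 * GB)` chooses a multipart upload, because the object is larger than the 5 GB single PUT limit.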

Buckets:

- Buckets act as the root folders of S3, and each bucket must have a name that is unique across all of AWS.

- Bucket names must respect the following rules:

  - The bucket name can be between 3 and 63 characters long and can contain only lowercase characters, numbers, periods, and dashes.

  - Each label in the bucket name must start with a lowercase letter or number.

  - The bucket name cannot contain underscores, end with a dash, have consecutive periods, or use dashes adjacent to periods.

  - The bucket name cannot be formatted as an IP address.

- S3 bucket names have a universal name-space, meaning each bucket name must be globally unique.

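- Path-style S3 URLs have the form https://s3-region.amazonaws.com/bucketname, for example https://s3-eu-west-1.amazonaws.com/myawesomebucket. The virtual-hosted style puts the bucket name in the host name instead: https://bucketname.s3.region.amazonaws.com.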
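The naming rules listed above can be checked with a couple of regular expressions. The following is a minimal sketch, not an official validator: `is_valid_bucket_name` is a hypothetical helper, and it covers only the rules stated in this section.

```python
import re

# A label starts and ends with a lowercase letter or digit; dashes are
# allowed only in the middle, so a dash can never sit next to a period.
LABEL = re.compile(r"[a-z0-9](?:[a-z0-9-]*[a-z0-9])?")
IPV4_LIKE = re.compile(r"\d{1,3}(?:\.\d{1,3}){3}")


def is_valid_bucket_name(name):
    """Check a bucket name against the rules listed in this section."""
    if not 3 <= len(name) <= 63:
        return False
    if IPV4_LIKE.fullmatch(name):
        return False  # formatted as an IP address
    # Consecutive periods produce an empty label, which fails the match.
    return all(LABEL.fullmatch(label) for label in name.split("."))
```

Usage: `is_valid_bucket_name("myawesomebucket")` returns `True`, while `is_valid_bucket_name("My_Bucket")` returns `False` because of the uppercase letters and the underscore.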

Versioning and Cross-Region Replication (CRR):

Versioning:

- When versioning is enabled, a slider at the top of the console lets you hide or show all versions of the files in the bucket.

- If you truly want versioning off, you have to create a new bucket and move your objects to it.

- If a file is deleted, for example, set the slider to show in order to see the previous versions of the file.

- With versioning enabled, deleting a file makes S3 create a delete marker for that file, which tells the console to stop displaying the file.

- To restore a deleted file, delete its delete marker, and the file is displayed in the bucket again.

- To move back to a previous version of a file, including a deleted file, delete the newest version of the file or the delete marker, and the previous version is displayed.

- Versioning stores a full copy of every version of a file. For example, upload a 1 MB file and your storage usage is 1 MB. If you then tweak the content without changing the size and upload the file again, the console shows only the single updated file while versions are hidden. Set the slider to show, however, and you will see both the original 1 MB file and the updated 1 MB file, so your total S3 usage is now 2 MB, not 1 MB.

- Versioning integrates with life-cycle management and supports MFA delete capability.
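The delete-marker behaviour and the storage arithmetic described above can be illustrated with a toy in-memory model. This is a sketch of the concept only: `VersionedBucket` and its methods are hypothetical names and do not correspond to the S3 API.

```python
DELETE_MARKER = object()  # stands in for an S3 delete marker


class VersionedBucket:
    """Toy model of a versioning-enabled bucket (not the S3 API)."""

    def __init__(self):
        self._versions = {}  # key -> list of versions, oldest first

    def put(self, key, data):
        # Every upload adds a new version; older versions are kept.
        self._versions.setdefault(key, []).append(data)

    def delete(self, key):
        # A delete only adds a marker on top of the existing versions.
        self._versions.setdefault(key, []).append(DELETE_MARKER)

    def get(self, key):
        versions = self._versions.get(key, [])
        if not versions or versions[-1] is DELETE_MARKER:
            return None  # the object is hidden, as in the console
        return versions[-1]

    def remove_newest_version(self, key):
        # Removing the newest version (or the marker) exposes the previous one.
        self._versions[key].pop()

    def storage_used(self):
        return sum(
            len(version)
            for versions in self._versions.values()
            for version in versions
            if version is not DELETE_MARKER
        )
```

Uploading two 1 MB versions of the same key makes `storage_used()` report 2 MB, and calling `remove_newest_version` after a `delete` makes the object readable again.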

Cross-Region Replication (CRR):

- Versioning must be enabled to take advantage of Cross-Region Replication.

- Versioning resides under the Cross-Region Replication tab.

- Once versioning is turned on, it cannot be turned off; it can only be suspended.

- For Cross-Region Replication (CRR), once versioning is enabled, clicking on the tab gives you the option to suspend versioning and to enable Cross-Region Replication.

- Cross-Region Replication (CRR) requires versioning to be enabled on both the source and destination buckets in the selected regions.

- The destination bucket must already be created, and its name must again be globally unique.

- You have the ability to select a separate storage class for any Cross-Region Replication destination bucket.

- CRR does NOT replicate existing objects. Only objects stored after the feature is turned on are replicated; any object that already exists at that time is NOT replicated automatically.

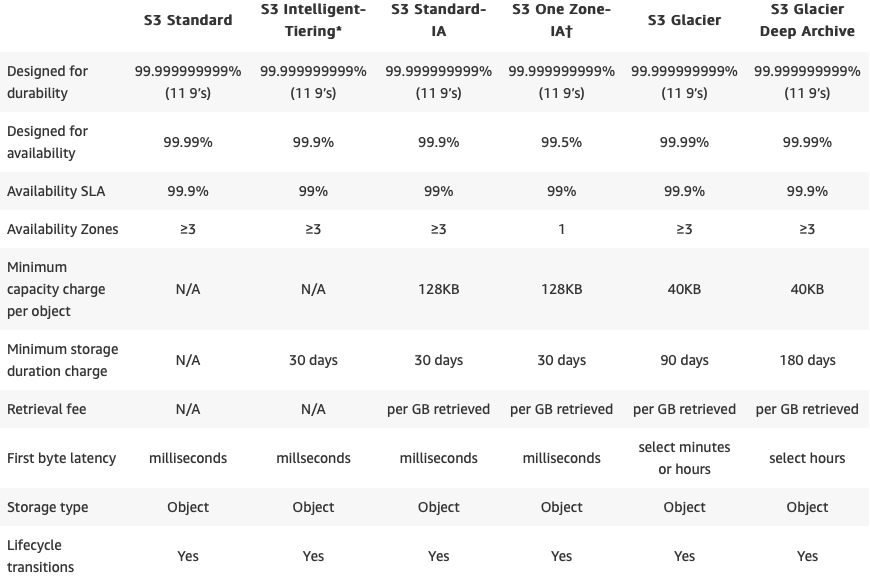

Storage classes:

S3 Standard:

- The default storage class. If you don’t specify the storage class when you upload an object, Amazon S3 assigns the STANDARD storage class.

- Stored redundantly across multiple devices in multiple facilities.

- Designed to sustain the loss of 2 facilities concurrently.

- Eleven 9s (99.999999999%) durability, 99.99% availability.

Amazon S3 Intelligent Tiering:

- A good fit when the access frequency of an S3 object is unknown or unpredictable.

- Designed to optimize costs by automatically moving data to the most cost-effective access tier.

- There are no retrieval fees in S3 Intelligent-Tiering.

- Eleven 9s durability, 99.9% availability.

S3 Standard-IA (Infrequent Access):

- For data that is accessed less frequently but requires rapid access when needed.

- Lower storage price than S3 Standard, but you are charged a retrieval fee.

- Also designed to sustain the loss of 2 facilities concurrently.

- Eleven 9s durability, 99.9% availability.

- S3 Standard-IA has a minimum billable object size of 128 KB.

S3 ONEZONE_IA:

- Stores the object data in only one AZ.

- Data is not resilient to the physical loss of the AZ.

- Eleven 9s durability, 99.5% availability.

S3 Glacier:

- Very cheap: stores data for as little as $0.01 per gigabyte per month.

- Optimized for data that is infrequently accessed. Used for archival only.

- With the standard retrieval option, it takes 3-5 hours to restore access to files from Glacier.

- Content of the archive is immutable, meaning that after an archive is created it cannot be updated.

S3 Glacier Deep Archive:

- Amazon S3’s lowest-cost storage class.

- Designed for long-term retention and digital preservation of data that may be accessed once or twice a year.

Life-cycle Management:

- When you click Life-cycle and add a rule, the rule can be applied to either the entire bucket or a single ‘folder’ in the bucket.

- Rules can either move objects to a different storage tier or delete them altogether.

- Rules can be applied to the current version and to previous versions.

- Multiple actions can be combined. For example, if you select a transition from STD to IA storage 30 days after upload and also select Archive 60 days after upload, the object is moved to IA storage 30 days after it is uploaded and to Glacier 30 days after that.

- Timing is calculated from the UPLOAD date, not from the date of the previous action.

- The transition from the STD to the IA storage class requires a MINIMUM of 30 days. You cannot select or set a range of less than 30 days.

- If STD->IA is set, you have to wait a minimum of 60 days to archive the object, because the minimum for STD->IA is 30 days and the transition to Glacier then takes an additional 30 days.

- Amazon S3 does not transition objects that are less than 128 KB to the STANDARD_IA or ONEZONE_IA storage classes because it’s not cost-effective.

- When you enable versioning, the life-cycle management tab has 2 sections: one for the current version of an object and another for previous versions.

- The minimum object size for IA storage is 128 KB.

- A policy can be set to permanently delete an object after a given time frame.

- If versioning is enabled, the object must be set to expire before it can be permanently deleted.
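The 30-day and 60-day timing rules above can be sketched as a rule builder. This is a rough illustration under stated assumptions: `build_lifecycle_rule`, `storage_class_at`, the rule ID, and the 365-day expiration are all hypothetical. The dictionary follows the shape that boto3's `put_bucket_lifecycle_configuration` is understood to accept for a single rule, but nothing here calls AWS.

```python
def build_lifecycle_rule(prefix, ia_days=30, glacier_days=60, expire_days=365):
    """Build one lifecycle rule; all day counts are measured from upload."""
    if ia_days < 30:
        raise ValueError("STD -> IA requires a minimum of 30 days")
    if glacier_days < ia_days + 30:
        raise ValueError("archiving must come at least 30 days after IA")
    if expire_days <= glacier_days:
        raise ValueError("expiration must come after the last transition")
    return {
        "ID": "std-ia-glacier",        # hypothetical rule name
        "Filter": {"Prefix": prefix},  # "" applies to the entire bucket
        "Status": "Enabled",
        "Transitions": [
            {"Days": ia_days, "StorageClass": "STANDARD_IA"},
            {"Days": glacier_days, "StorageClass": "GLACIER"},
        ],
        "Expiration": {"Days": expire_days},
    }


def storage_class_at(rule, age_days):
    """Return where an object of the given age (days since upload) sits."""
    if age_days >= rule["Expiration"]["Days"]:
        return "EXPIRED"
    current = "STANDARD"
    for transition in sorted(rule["Transitions"], key=lambda t: t["Days"]):
        if age_days >= transition["Days"]:
            current = transition["StorageClass"]
    return current
```

With the defaults, an object under the chosen prefix is in `STANDARD` on day 10, `STANDARD_IA` on day 30, and `GLACIER` on day 60.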

Data Consistency model:

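- Historically, S3 provided read-after-write consistency for PUTs of new objects and eventual consistency for overwrite PUTs and DELETEs.

- Under that older model, a HEAD or GET request made for a key before the object was created made the following read-after-write eventually consistent.

- Since December 2020, S3 provides strong read-after-write consistency for PUTs and DELETEs of objects, so a read that follows a successful write returns the latest data.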

Static website hosting:

- To host a static website on Amazon S3, configure an Amazon S3 bucket for website hosting and then upload your website content to the bucket.

- URL structure: http://bucketname.s3-website-us-west-2.amazonaws.com

- You can access the website through the AWS Region-specific Amazon S3 website endpoint for your bucket.

Amazon S3 Transfer Acceleration:

- Transfer Acceleration takes advantage of Amazon CloudFront’s globally distributed edge locations. As the data arrives at an edge location, data is routed to Amazon S3 over an optimized network path.

- Utilizes the CloudFront Edge Network to accelerate your uploads to S3.

- Instead of uploading directly to your S3 bucket, you can use a distinct URL to upload directly to an edge location which will then transfer the file to S3.

- You might want to use Transfer Acceleration on a bucket for various reasons, including the following:

  - You have customers that upload to a centralized bucket from all over the world.

  - You transfer gigabytes to terabytes of data on a regular basis across continents.

- Transfer Acceleration must be enabled on the bucket. After enabling Transfer Acceleration on a bucket it might take up to thirty minutes before the data transfer speed to the bucket increases.

- To access the bucket that is enabled for Transfer Acceleration, you must use the endpoint bucketname.s3-accelerate.amazonaws.com

- You must be the bucket owner to set the transfer acceleration state.

- There is a test utility available that will test uploading direct to S3 vs through Transfer Acceleration.

- The Transfer Acceleration URL has the form bucketname.s3-accelerate.amazonaws.com.
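The accelerate endpoint format `bucketname.s3-accelerate.amazonaws.com` can be assembled with a small helper. This is a sketch only: `accelerate_url` is a hypothetical function, and the check on periods reflects the assumption that Transfer Acceleration does not support bucket names containing periods.

```python
from urllib.parse import quote


def accelerate_url(bucket, key):
    """Build an HTTPS URL for an object through the accelerate endpoint."""
    if "." in bucket:
        # Assumption: Transfer Acceleration does not support such names.
        raise ValueError("bucket names with periods are not supported")
    # quote() percent-encodes unsafe characters but keeps "/" separators.
    return f"https://{bucket}.s3-accelerate.amazonaws.com/{quote(key)}"
```

For instance, `accelerate_url("myawesomebucket", "videos/clip 1.mp4")` yields a URL on the `myawesomebucket.s3-accelerate.amazonaws.com` host with the space in the key percent-encoded.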

Cross-Origin Resource Sharing (CORS):

- Cross-origin resource sharing (CORS) defines a way for client web applications that are loaded in one domain to interact with resources in a different domain.

- To configure your bucket to allow cross-origin requests, you create a CORS configuration, which is an XML document with rules that identify the origins that you will allow to access your bucket.
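As an illustration of the XML document described above, the sketch below builds a single-rule CORS configuration with the standard library. `build_cors_xml`, the example origin, and the default values are hypothetical; the element names follow the S3 CORS XML schema as commonly documented.

```python
import xml.etree.ElementTree as ET


def build_cors_xml(origins, methods=("GET",), max_age_seconds=3000):
    """Return a CORS configuration with one rule as an XML string."""
    root = ET.Element("CORSConfiguration")
    rule = ET.SubElement(root, "CORSRule")
    for origin in origins:
        ET.SubElement(rule, "AllowedOrigin").text = origin
    for method in methods:
        ET.SubElement(rule, "AllowedMethod").text = method
    ET.SubElement(rule, "AllowedHeader").text = "*"
    ET.SubElement(rule, "MaxAgeSeconds").text = str(max_age_seconds)
    return ET.tostring(root, encoding="unicode")
```

Calling `build_cors_xml(["https://www.example.com"], methods=("GET", "PUT"))` produces a document that allows GET and PUT requests from that one origin.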

Security:

Encryption:

Two types of encryption are available in S3:

- In transit:

  - Uses SSL/TLS to encrypt the transfer of the object.

- At rest (AES-256):

  - Server side: S3-managed keys (SSE-S3).

  - Server side: AWS Key Management Service managed keys (SSE-KMS).

  - Server side: encryption with customer-provided keys (SSE-C).

  - Client-side encryption using a client-side master key or a KMS-managed customer master key.

- You can set default encryption on a bucket so that all new objects are encrypted when they are stored in the bucket.
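A default encryption setting could be expressed as the small configuration below. This is a sketch under assumptions: `default_encryption_config` is a hypothetical helper, and the dictionary mirrors the shape that boto3's `put_bucket_encryption` is understood to accept as `ServerSideEncryptionConfiguration`. Nothing here calls AWS.

```python
def default_encryption_config(kms_key_id=None):
    """Build a default-encryption configuration for a bucket.

    Without a key ID the rule uses SSE-S3 (AES-256); with one it uses SSE-KMS.
    """
    if kms_key_id is None:
        default = {"SSEAlgorithm": "AES256"}
    else:
        default = {"SSEAlgorithm": "aws:kms", "KMSMasterKeyID": kms_key_id}
    return {"Rules": [{"ApplyServerSideEncryptionByDefault": default}]}
```

`default_encryption_config()` selects SSE-S3, while passing a key identifier such as the made-up `"alias/example-key"` selects SSE-KMS.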

Bucket Policies:

- Bucket policies let you grant or deny permissions across some or all of the objects within a single bucket.
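A bucket policy is a JSON document, and a minimal one might look like the sketch below. Everything specific in it is an assumption made for illustration: `read_only_policy` is a hypothetical helper, `111122223333` is a placeholder account ID, and the statement grants read access to another account for objects under one prefix.

```python
import json


def read_only_policy(bucket, account_id, prefix=""):
    """Allow one AWS account to read objects under a prefix of the bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowAccountRead",
                "Effect": "Allow",  # use "Deny" to block access instead
                "Principal": {"AWS": f"arn:aws:iam::{account_id}:root"},
                "Action": "s3:GetObject",
                # An empty prefix covers all objects in the bucket.
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            }
        ],
    }


policy_json = json.dumps(
    read_only_policy("myawesomebucket", "111122223333", "reports/"), indent=2
)
```

The `Resource` pattern is what limits the statement to some of the objects (here, keys starting with `reports/`) rather than all of them.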

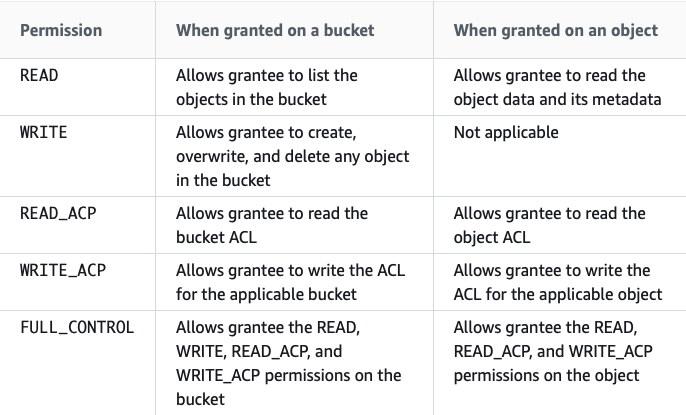

Access Control Lists:

- Amazon S3 access control lists (ACLs) enable you to manage access to buckets and objects from other AWS accounts.

- Each bucket and object has an ACL attached to it as a subresource.

- It defines which AWS accounts or groups are granted access and the type of access.

- When you create a bucket or an object, Amazon S3 creates a default ACL that grants the resource owner full control over the resource.

- Amazon S3 supports the following permissions in an ACL: READ, WRITE, READ_ACP, WRITE_ACP, and FULL_CONTROL.

- Object ACLs are limited to 100 granted permissions per ACL.

IAM policies:

- Grant users permissions within your own AWS account to access S3 resources.

MFA delete:

- MFA delete adds an authentication layer that is required to permanently delete an object version or to change the versioning state of the bucket, which helps prevent accidental deletion of the bucket’s content.

Monitoring:

CloudWatch:

- Monitor bucket storage using CloudWatch, which collects and processes storage data from Amazon S3 into readable, daily metrics (reported once per day).

- Monitor S3 requests. These metrics are available at 1-minute intervals at the Amazon S3 bucket level.

- Using Amazon CloudWatch alarms, you watch a single metric over a time period that you specify. If the metric exceeds a given threshold, a notification is sent to an Amazon SNS topic or AWS Auto Scaling policy.

CloudTrail:

- CloudTrail captures a subset of API calls for Amazon S3 as events.

- By default, CloudTrail logs bucket-level actions.

- You can also get CloudTrail logs for object-level Amazon S3 actions

Pricing:

- The rate you’re charged depends on your objects’ size, how long you stored the objects during the month, and the storage class.

- You pay for requests made against your S3 buckets and objects.

- You pay for all bandwidth into and out of Amazon S3, except for the following:

  - Data transferred in from the internet.

  - Data transferred out to an Amazon Elastic Compute Cloud (Amazon EC2) instance when the instance is in the same AWS Region as the S3 bucket.

  - Data transferred out to Amazon CloudFront (CloudFront).

- You pay for the storage management features (Amazon S3 inventory, analytics, and object tagging) that are enabled on your account’s buckets.

Heads-up: S3 Deprecation for path-styled URLs coming soon

https://aws.amazon.com/blogs/aws/amazon-s3-deprecation-plan-the-rest-of-the-story/